Why this post?

Recently, while talking with a couple of college students and other technology professionals, I noticed some confusion about virtualization and related technologies. Many people know the tools and the end options but are unclear about the technology behind them. So I decided to write a series of blog posts covering the theory of virtualization and cloud computing: what it is and how it works. I hope this helps clear up some confusion at the basic level, especially for my young friends in college and freshers in the IT industry.

What is Virtualization?

Virtualization is a broad term that refers to the abstraction of resources across many aspects of computing.

Even something as simple as partitioning a hard drive counts as virtualization, because you take one physical drive and partition it so that it appears as two separate drives. Devices, applications and human users can then interact with each virtual resource as if it were a real, single logical resource.

History of Virtualization:

IBM first introduced virtualization in the 1960s on its S/360 mainframe systems. For a long time it remained limited to mainframes.

In the late 1990s, VMware brought virtualization to the x86 environment and popularized the virtualization industry that we see today.

VMware succeeded, and virtualization became popular, because of its application in the x86 server segment. x86 servers are much cheaper than mainframes or their RISC counterparts. Also, as time went on, more and more powerful x86 servers were developed, which widened the scope for x86 virtualization. For example, consider the following scenario:

A file server or an ordinary mail server built years ago required 2 to 4 single-core CPUs and 4 to 8 GB of RAM. In those days, an entire x86 server was needed to meet this requirement. Today we have servers with 4 sockets of 12 cores each (essentially a single server with 48 physical cores, each running at, say, 2.4 GHz) and as much as 2 TB of physical memory (RAM). Clearly, one such server has enough capacity to host many of those older servers.
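
To put rough numbers on this scenario, here is a minimal sketch of the arithmetic. It assumes the simplest possible model: each guest CPU gets one dedicated physical core, nothing is overcommitted, and hypervisor overhead is ignored. The function name `max_guests` is made up for illustration and does not come from any real tool.

```python
# Hypothetical sizing helper for the scenario above (illustrative only).
# Assumption: one physical core per guest CPU, no overcommit, no overhead.

def max_guests(host_cores, host_ram_gb, guest_cpus, guest_ram_gb):
    """Return how many identical guests fit on one host."""
    by_cpu = host_cores // guest_cpus      # limit imposed by cores
    by_ram = host_ram_gb // guest_ram_gb   # limit imposed by memory
    return min(by_cpu, by_ram)             # the scarcer resource wins

HOST_CORES = 4 * 12        # 4 sockets x 12 cores each = 48 cores
HOST_RAM_GB = 2 * 1024     # 2 TB of RAM expressed in GB

# Smallest old server from the scenario: 2 CPUs, 4 GB RAM
smallest = max_guests(HOST_CORES, HOST_RAM_GB, guest_cpus=2, guest_ram_gb=4)
# Largest old server from the scenario: 4 CPUs, 8 GB RAM
largest = max_guests(HOST_CORES, HOST_RAM_GB, guest_cpus=4, guest_ram_gb=8)

print(f"Small guests per host: {smallest}")
print(f"Large guests per host: {largest}")
```

Under these conservative assumptions the CPU count, not the memory, is the limiting factor, and one modern host stands in for somewhere between 12 and 24 of the old servers. Real deployments usually share cores between guests, so the actual number can be higher.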

So the following two facts led to the virtualization revolution:

a) The availability of cheaper x86 servers.

b) Increasingly powerful servers, each capable of hosting many servers on a single machine.

There are many other benefits of virtualization, which I will discuss later in this post, but initially these two factors drove the adoption.

Why Virtualization?

Let's have a look at the reasons why someone would try virtualization:

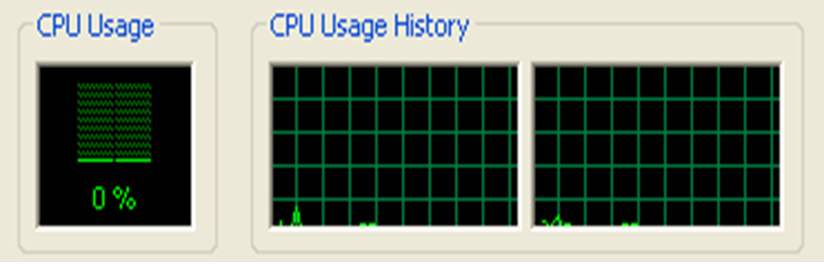

- Traditionally, 90% of the applications running on servers were either designed long ago, when servers did not have this much power, or do not need many CPU cycles to run. So if you look at the CPU utilization of most traditional servers, it falls below, say, 10% or 20% of total capacity (except for applications that need more processing power). Everything in a datacenter costs money, so the costly CPU power of most servers is underutilized.

- A heterogeneous environment is always hard to maintain.

- In today's environment, business continuity is the most important factor in designing datacenters.

- Traditional disaster recovery has many disadvantages and challenges, which are solved in a virtualized environment.

- Virtualization provides benefits like better reliability and control, which make possible new SLAs such as five nines (99.999%) availability.
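
To get a feel for what an availability target like five nines means in practice, here is a small sketch that converts an availability percentage into the downtime it allows per year. It assumes a 365-day year, and the helper name `allowed_downtime_minutes` is made up for illustration.

```python
# Convert an availability percentage into allowed downtime per year.
# Assumption: a 365-day year (leap years ignored).

MINUTES_PER_YEAR = 365 * 24 * 60

def allowed_downtime_minutes(availability_percent):
    """Minutes per year a service may be down at the given availability."""
    unavailable_fraction = 1 - availability_percent / 100
    return MINUTES_PER_YEAR * unavailable_fraction

for target in (99.0, 99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% availability -> {minutes:.2f} minutes of downtime per year")
```

At 99.999% the budget works out to a little over five minutes of downtime in a whole year, which is why such an SLA is hard to promise with a single physical server.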

Virtualization directly or indirectly solves the above problems. But it became popular mainly because it consolidates many servers onto one, and because of the savings that follow from that.

In Part-B of this series I will cover the different types of virtualization and how they differ from each other. In Part-C, I will cover the more advanced, in-depth topic of how virtualization actually works on physical hardware.

Till then, ponder over the question below:

If I give a shutdown command in any OS, it powers down the physical hardware. In a virtualized environment we also run many OSes, and a command given in any one of them is ultimately executed on the physical CPU. So in principle it should power down the entire environment. But it does not. Why, and how? This is the main question, and the way each virtualization technology answers it is what sets them apart from one another.

I am going to answer this interesting question in Part-C. Till then...